Supervisor Meeting · Mar 3, 2026

3D Scene Understanding for Object Navigation

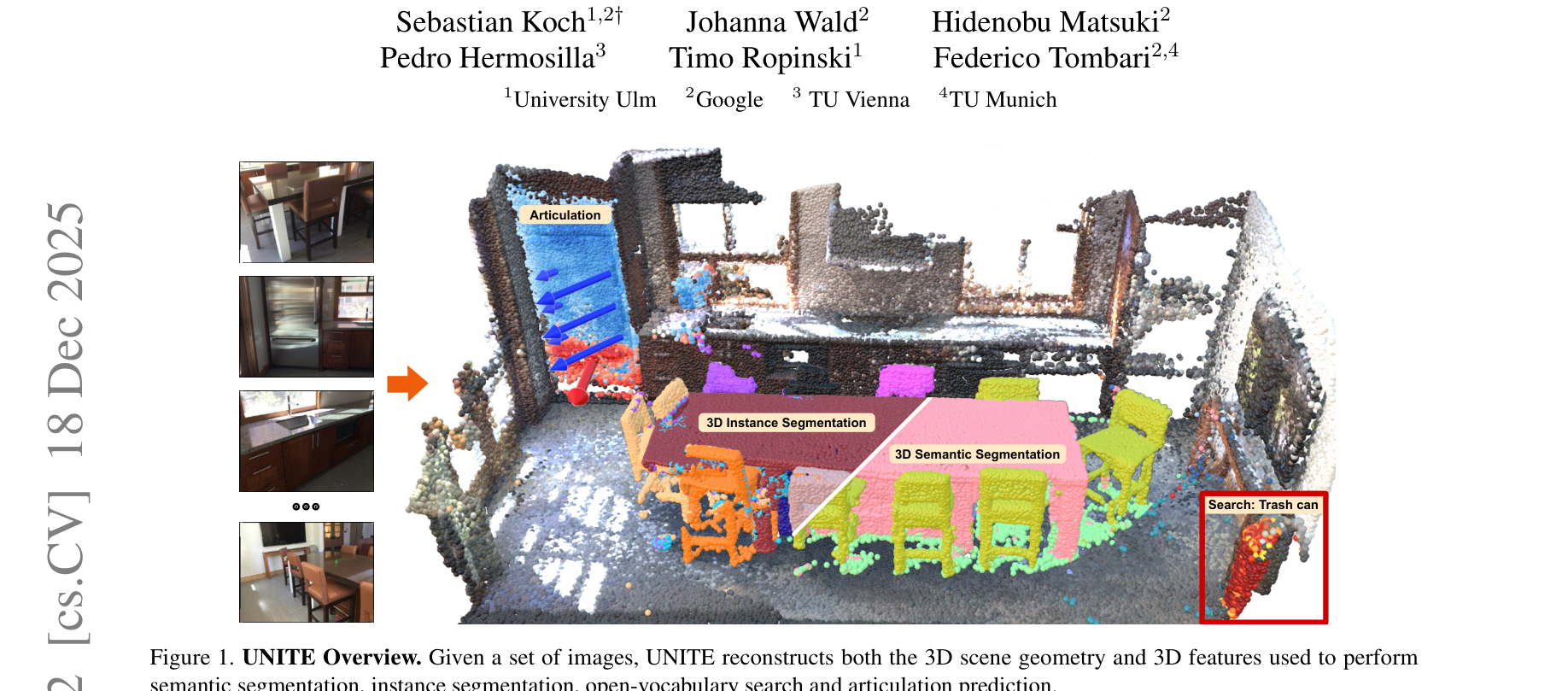

UNITE

What Is UNITE?

Takes a set of RGB images → reconstructs the full 3D scene → labels every 3D point with what it is, which object it belongs to, and how it moves.

Key Properties

- Works from RGB only — no depth sensor needed

- Single forward pass, a few seconds per scene

- Outputs are language-queryable (CLIP-aligned)

- Instance-level segmentation in 3D

Per-Point Output

- 3D coordinates — geometry

- CLIP features — open-vocabulary semantics

- Instance ID — object grouping

- Articulation vector — how parts move
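The per-point output is essentially four aligned arrays. Below is a minimal sketch of how such arrays could be stored and queried with language. It assumes a text embedding from a CLIP text encoder (not called here; a stand-in vector is used), an arbitrary feature width `D`, a 3-D articulation vector, and a hypothetical cosine-similarity threshold. All names are illustrative and not UNITE's actual API.

```python
import numpy as np

rng = np.random.default_rng(0)
N, D = 1000, 512  # hypothetical point count and CLIP feature width

# Hypothetical per-point outputs (random stand-ins for real predictions)
points = rng.normal(size=(N, 3))          # 3D coordinates (geometry)
clip = rng.normal(size=(N, D))            # CLIP-aligned features (semantics)
instance_id = rng.integers(0, 20, N)      # object grouping
articulation = rng.normal(size=(N, 3))    # how parts move (dimension assumed)

# Stand-in for a CLIP text embedding of e.g. "chair" (assumption)
text_embedding = rng.normal(size=D)
# Plant the query: make instance 7's features close to the text embedding
sel = instance_id == 7
clip[sel] = text_embedding + 0.1 * rng.normal(size=(sel.sum(), D))

def query(text_emb, clip_feats, threshold=0.25):
    """Boolean mask of points whose feature has cosine similarity > threshold."""
    t = text_emb / np.linalg.norm(text_emb)
    f = clip_feats / np.linalg.norm(clip_feats, axis=1, keepdims=True)
    return f @ t > threshold

mask = query(text_embedding, clip)
target_instances = np.unique(instance_id[mask])  # which objects matched
target_points = points[mask]                      # where they are in 3D
```

Grouping matches by instance ID turns a per-point similarity map into a list of candidate objects, which is the form a navigation goal needs.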


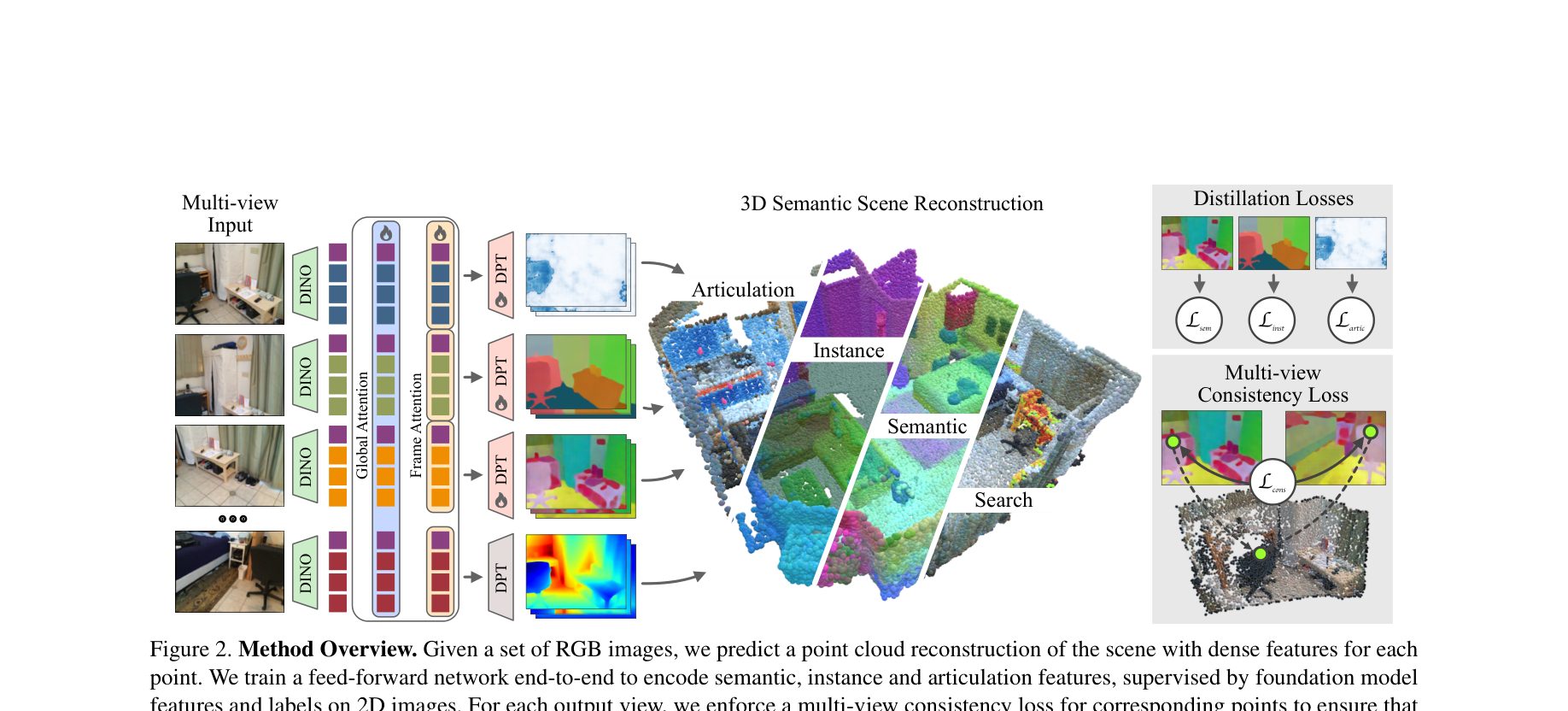

How It Works

1. Input

Set of RGB images from different viewpoints of the same scene

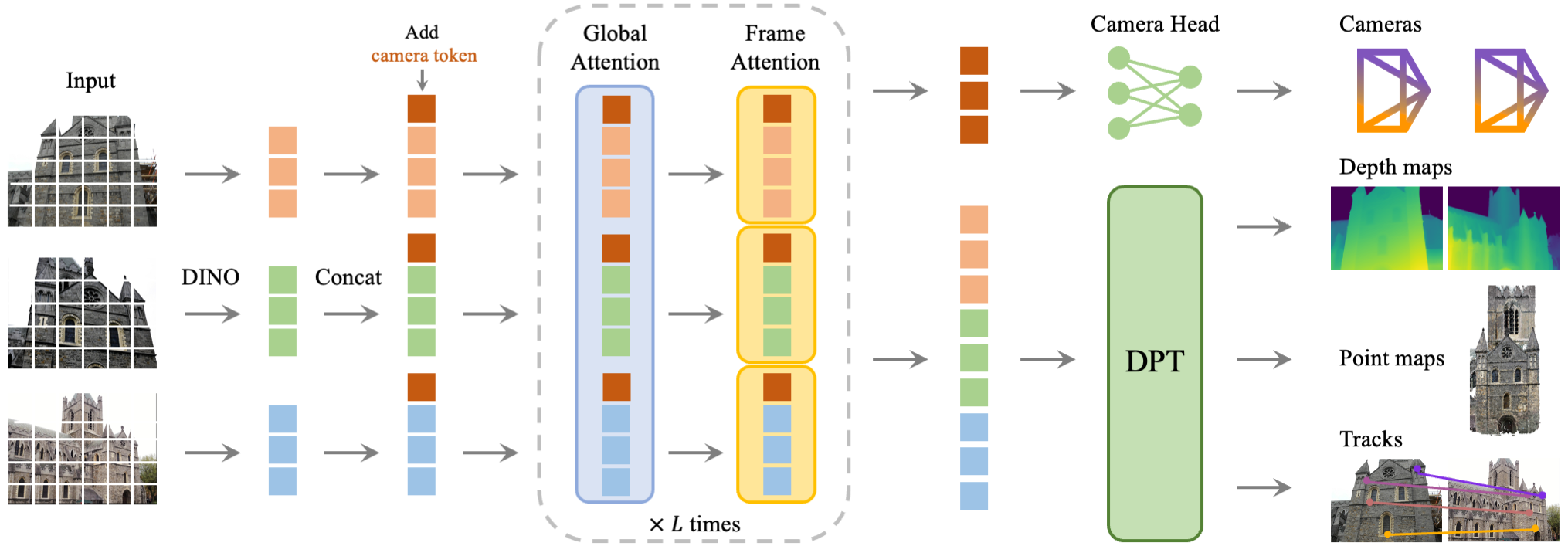

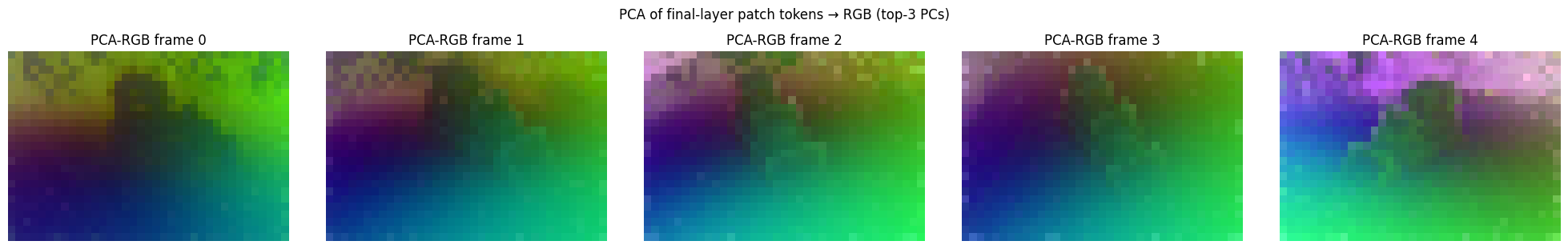

2. Backbone (VGGT)

Pre-trained vision transformer encodes each image and fuses information across views with alternating frame-wise and global attention → predicts 3D geometry

3. Semantic Heads (DPT)

Task-specific DPT heads on shared backbone predict semantics, instances, and articulation per point

4. Output

Dense 3D point cloud where each point has: coordinates, CLIP features, instance ID, articulation vector
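Step 4 can be read as flattening per-view, per-pixel head outputs into one point list. A minimal sketch follows, assuming the heads return aligned `(S, H, W, ...)` maps with point coordinates already in a shared frame, plus a per-pixel confidence map used for filtering. The confidence threshold, toy sizes, and function name are hypothetical.

```python
import numpy as np

S, H, W, D = 4, 8, 8, 16   # views, height, width, feature dim (toy values)
rng = np.random.default_rng(0)

# Hypothetical per-view head outputs, one value per pixel
point_map = rng.normal(size=(S, H, W, 3))   # 3D coordinates in a shared frame
clip_map = rng.normal(size=(S, H, W, D))    # CLIP-aligned features
inst_map = rng.integers(0, 5, (S, H, W))    # instance IDs
artic_map = rng.normal(size=(S, H, W, 3))   # articulation vectors
conf_map = rng.uniform(size=(S, H, W))      # per-pixel confidence (assumed)

def to_point_cloud(point_map, clip_map, inst_map, artic_map, conf_map, min_conf=0.5):
    """Merge all views into one dense point cloud, dropping low-confidence pixels."""
    keep = conf_map.reshape(-1) >= min_conf
    return {
        "xyz": point_map.reshape(-1, 3)[keep],
        "clip": clip_map.reshape(-1, clip_map.shape[-1])[keep],
        "instance": inst_map.reshape(-1)[keep],
        "articulation": artic_map.reshape(-1, 3)[keep],
    }

cloud = to_point_cloud(point_map, clip_map, inst_map, artic_map, conf_map)
```

Because every head predicts on the same pixel grid, a single shared mask keeps all four attributes aligned point by point.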

Foundation

VGGT: Visual Geometry Grounded Transformer (CVPR 2025 Best Paper)

Parameter Breakdown (1.26B total)

| Component | Parameters | Share |
|---|---|---|
| Aggregator | 909M | 72.4% |
| Camera Head | 216M | 17.2% |
| Depth Head | 33M | 2.6% |
| Point Head | 33M | 2.6% |
| Track Head | 66M | 5.2% |
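A quick arithmetic check of the breakdown: the listed component counts sum to 1,257M, which rounds to the stated 1.26B, and shares recomputed from these rounded counts agree with the table to within 0.1 percentage points (the small differences come from rounding of the listed counts).

```python
# Component sizes (millions) and shares (%) copied from the table above
components = {
    "Aggregator": (909, 72.4),
    "Camera Head": (216, 17.2),
    "Depth Head": (33, 2.6),
    "Point Head": (33, 2.6),
    "Track Head": (66, 5.2),
}

total_m = sum(m for m, _ in components.values())
print(f"total: {total_m}M ~ {total_m / 1000:.2f}B")  # total: 1257M ~ 1.26B

for name, (m, share) in components.items():
    recomputed = 100 * m / total_m
    # Listed counts are rounded, so allow up to 0.1 pp disagreement
    assert abs(recomputed - share) <= 0.1, name
```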

Landscape

The Research Gap

Line A: VLAs with 3D

Several recent works add 3D to VLAs, but only with simple or implicit 3D representations:

- SpatialVLA — 3D position encoding only (RSS 2025)

- Spatial Forcing — implicit alignment, no 3D at inference (ICLR 2026)

- 3D-VLA — point clouds but no semantics per point (ICML 2024)

- eVGGT — distilled VGGT as frozen encoder for ACT/DP manipulation (Vuong et al. 2025)

Line B: Rich 3D Understanding

Foundation models for dense 3D scene understanding exist, but their semantic outputs have not yet been used for action:

- UNITE — full semantic 3D from RGB (Koch et al. 2025)

- VGGT — geometry backbone (CVPR 2025 Best Paper)

The Gap

eVGGT validates VGGT for robotics — but uses latent geometry only.

- eVGGT: geometry-only, no semantics/CLIP/instance features

- eVGGT: manipulation only, not navigation

- UNITE's per-point semantics + instances never used for action

- World models (DreamerV3) learn from 2D pixels only — never tested with rich 3D features

Research Question

Can 3D scene understanding improve world models for navigation?

Hypothesis

Replacing DreamerV3's CNN encoder with frozen VGGT + DPT features gives the world model an explicit geometric and semantic prior — leading to better sample efficiency and navigation success on HM3D ObjectNav.

Standard DreamerV3 learns all 3D structure implicitly from 2D pixels. We provide it directly via a frozen VGGT backbone.

Background

DreamerV3 — Recurrent State-Space Model (RSSM)

World Model (RSSM)

- Deterministic — GRU carries long-range memory via \(h_t\)

- Stochastic — categorical latent \(z_t\) (32 categorical variables × 32 classes each)

- Posterior uses real observations; Prior imagines without them
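One RSSM step can be sketched as follows: the GRU updates \(h_t\) from the previous latent and action, then \(z_t\) is sampled either from the posterior (conditioned on the encoder embedding) or from the prior (no observation, as in imagination). This is a toy NumPy sketch under stated assumptions: untrained random weights, small hypothetical sizes, a simplified GRU cell without layer norm, and the 1% uniform mix applied at sampling. It is not DreamerV3's reference implementation.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy sizes (assumed); real sizes depend on the DreamerV3 model config
H_DIM, EMBED_DIM, ACT_DIM = 64, 32, 4
GROUPS, CLASSES = 32, 32              # 32 categorical variables x 32 classes
Z_DIM = GROUPS * CLASSES

def dense(n_in, n_out):
    return rng.normal(0.0, 0.1, (n_in, n_out))

# Untrained toy weights, for shape and control-flow illustration only
W_in = dense(Z_DIM + ACT_DIM, H_DIM)
W_gru = dense(2 * H_DIM, 3 * H_DIM)   # reset, candidate, update gates
W_prior = dense(H_DIM, Z_DIM)
W_post = dense(H_DIM + EMBED_DIM, Z_DIM)

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gru(h, x):
    """Simplified GRU cell (no layer norm): deterministic path h_t."""
    reset, cand, update = np.split(np.concatenate([x, h]) @ W_gru, 3)
    reset, update = sigmoid(reset), sigmoid(update)
    cand = np.tanh(reset * cand)
    return update * cand + (1.0 - update) * h

def sample_z(logits, mix=0.01):
    """Sample one-hot z_t from 32 independent categoricals with a 1% uniform mix."""
    logits = logits.reshape(GROUPS, CLASSES)
    probs = np.exp(logits - logits.max(axis=1, keepdims=True))
    probs /= probs.sum(axis=1, keepdims=True)
    probs = (1.0 - mix) * probs + mix / CLASSES
    idx = [rng.choice(CLASSES, p=p) for p in probs]
    z = np.zeros((GROUPS, CLASSES))
    z[np.arange(GROUPS), idx] = 1.0
    return z.reshape(-1)

def rssm_step(h, z, action, embed=None):
    """One RSSM step: posterior if an embedding is given, otherwise prior."""
    x = np.tanh(np.concatenate([z, action]) @ W_in)
    h = gru(h, x)
    if embed is None:
        z = sample_z(h @ W_prior)                          # prior (imagination)
    else:
        z = sample_z(np.concatenate([h, embed]) @ W_post)  # posterior (observed)
    return h, z

# Usage: one observed (posterior) step, then one imagined (prior) step
h, z = np.zeros(H_DIM), np.zeros(Z_DIM)
a = np.eye(ACT_DIM)[0]
h, z = rssm_step(h, z, a, embed=rng.normal(size=EMBED_DIM))
h, z = rssm_step(h, z, a)
```

The only difference between the two branches is whether the embedding enters the latent; this is the interface that changes when the encoder is swapped.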

Actor-Critic in Imagination

- Unroll prior 15 steps — no environment needed

- Actor maximises \(\lambda\)-returns over imagined trajectories

- Critic predicts discounted value
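The \(\lambda\)-return the actor maximises can be computed backwards over an imagined trajectory: \(R^\lambda_t = r_t + \gamma c_t \big((1-\lambda) v_{t+1} + \lambda R^\lambda_{t+1}\big)\), bootstrapped with \(R^\lambda_T = v_T\), where \(c_t\) is the predicted continue flag. A minimal sketch; the defaults \(\gamma = 0.997\) and \(\lambda = 0.95\) are the values we believe DreamerV3 uses, but treat them as assumptions.

```python
def lambda_returns(rewards, values, continues, gamma=0.997, lam=0.95):
    """Backward recursion for lambda-returns over an imagined rollout.

    rewards[t], continues[t] for t = 0..T-1; values has T + 1 entries,
    the last one (v_T) bootstraps the return beyond the horizon.
    """
    T = len(rewards)
    assert len(values) == T + 1 and len(continues) == T
    ret = values[T]
    out = [0.0] * T
    for t in reversed(range(T)):
        # Blend the one-step bootstrap v_{t+1} with the longer return R_{t+1}
        ret = rewards[t] + gamma * continues[t] * ((1 - lam) * values[t + 1] + lam * ret)
        out[t] = ret
    return out

# lam = 1, gamma = 1: plain sum of future rewards plus the bootstrap value
print(lambda_returns([1, 1, 1], [0, 0, 0, 5], [1, 1, 1], gamma=1.0, lam=1.0))  # [8.0, 7.0, 6.0]
```

With \(\lambda = 0\) the recursion reduces to one-step TD targets; with \(\lambda = 1\) it becomes the full Monte-Carlo return over the 15-step horizon plus the bootstrap.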

DreamerV3 Innovations

- Symlog predictions — scale-free losses across tasks

- Discrete latents — 32 × 32 categorical (inherited from DreamerV2), trained with KL balancing and free bits

- Unimix categoricals — mixing 1% uniform into each categorical keeps probabilities non-zero and avoids KL spikes

- Single hyperparameter set works across 150+ tasks
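Two of these tricks are simple enough to write out directly: symlog, \(\operatorname{sign}(x)\ln(|x|+1)\), with its inverse symexp, and the unimix categorical (softmax mixed with 1% uniform). A minimal standard-library sketch; function names are our own.

```python
import math

def symlog(x):
    """sign(x) * ln(|x| + 1): compresses large magnitudes, ~identity near 0."""
    return math.copysign(math.log1p(abs(x)), x)

def symexp(x):
    """Inverse of symlog: sign(x) * (exp(|x|) - 1)."""
    return math.copysign(math.expm1(abs(x)), x)

def unimix(logits, mix=0.01):
    """Softmax mixed with a uniform distribution, so no class reaches zero."""
    m = max(logits)
    exps = [math.exp(l - m) for l in logits]
    total = sum(exps)
    k = len(logits)
    return [(1.0 - mix) * e / total + mix / k for e in exps]

print(symlog(1000.0))                         # about 6.91
print(min(unimix([100.0, 0.0, 0.0, 0.0])))    # about 0.0025 instead of ~0
```

Predicting targets in symlog space keeps losses on similar scales whether rewards are of order 1 or 1000, which is what lets one hyperparameter set transfer across tasks.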

Proposed Architecture

VGGT + DPT + DreamerV3 RSSM World Model
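Relative to the baseline, only the encoder changes: a frozen feature extractor feeds a small trainable projection that produces the embedding the RSSM posterior consumes. The sketch below is a toy stand-in under loud assumptions: the "frozen VGGT + DPT" is replaced by a fixed random patch projection (14-pixel patches assumed), features are mean-pooled over patches, and all names and sizes are hypothetical. It shows the interface, not the actual integration.

```python
import numpy as np

rng = np.random.default_rng(0)
PATCH, FEAT_DIM, EMBED_DIM = 14, 128, 32   # hypothetical sizes

# Stand-in for the frozen VGGT + DPT extractor: fixed weights, never updated
P_frozen = rng.normal(0.0, 0.02, (PATCH * PATCH * 3, FEAT_DIM))

def frozen_features(image):
    """Split an (H, W, 3) image into patches and map each to a FEAT_DIM vector."""
    h, w, _ = image.shape
    patches = image.reshape(h // PATCH, PATCH, w // PATCH, PATCH, 3)
    patches = patches.transpose(0, 2, 1, 3, 4).reshape(-1, PATCH * PATCH * 3)
    return patches @ P_frozen                 # (num_patches, FEAT_DIM)

# The only trainable parameters in this sketch
W_embed = rng.normal(0.0, 0.1, (FEAT_DIM, EMBED_DIM))

def encode(image):
    """Frozen features -> mean pool -> trainable projection -> RSSM embedding."""
    feats = frozen_features(image)
    pooled = feats.mean(axis=0)
    return pooled @ W_embed

embed = encode(rng.uniform(size=(224, 224, 3)))   # shape (EMBED_DIM,)
```

Mean pooling is the simplest choice and discards spatial layout; attention pooling or keeping a coarse spatial grid are natural alternatives if the world model needs to know where things are.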

Encoder Selection

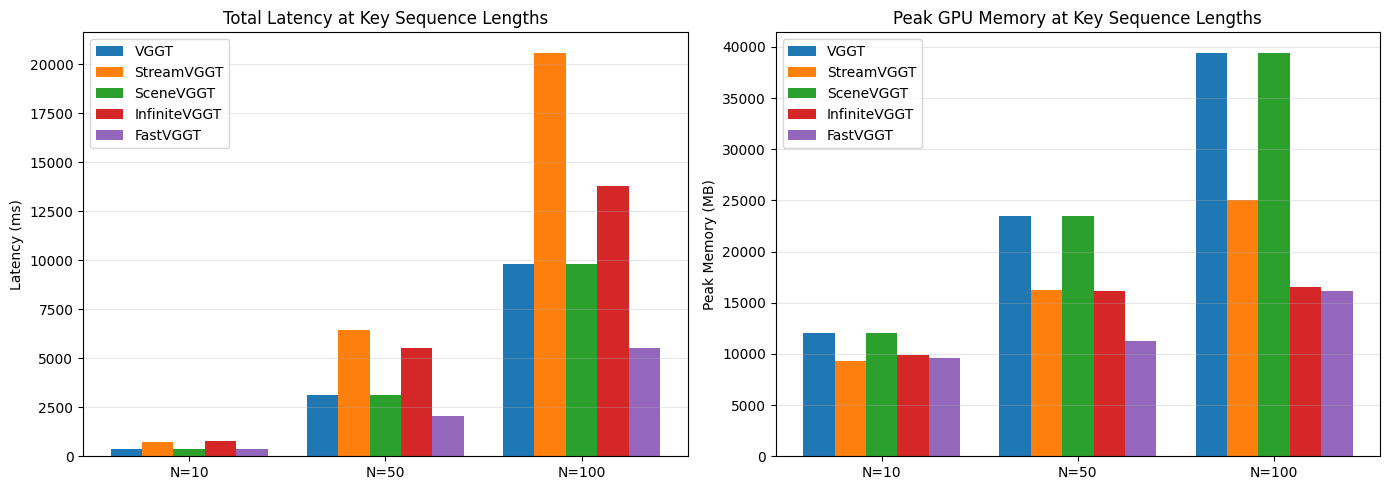

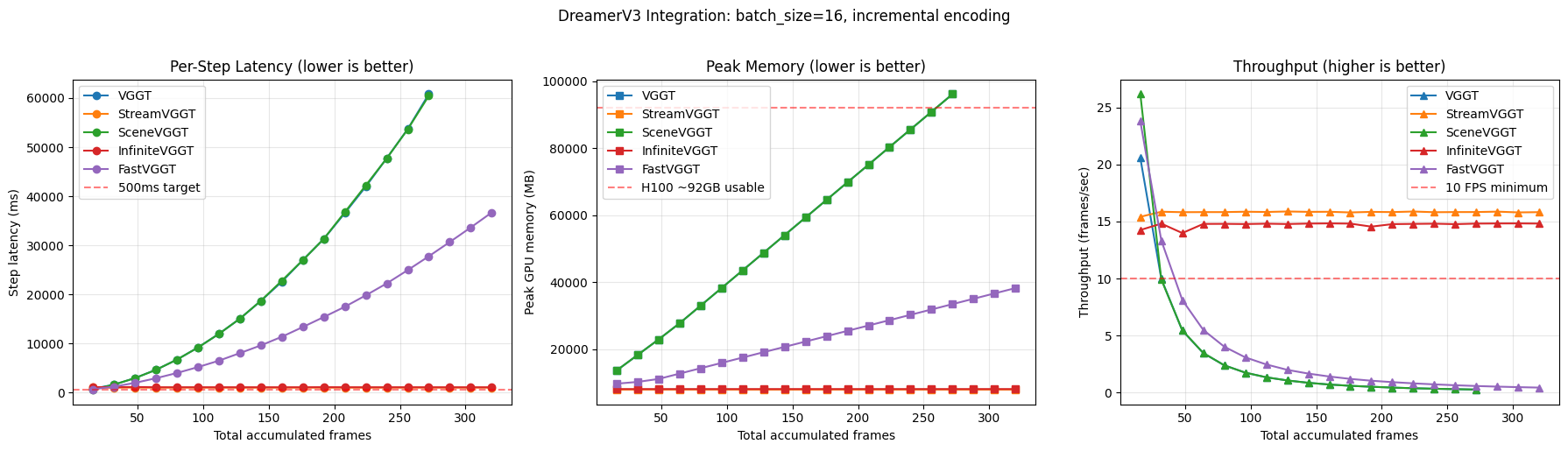

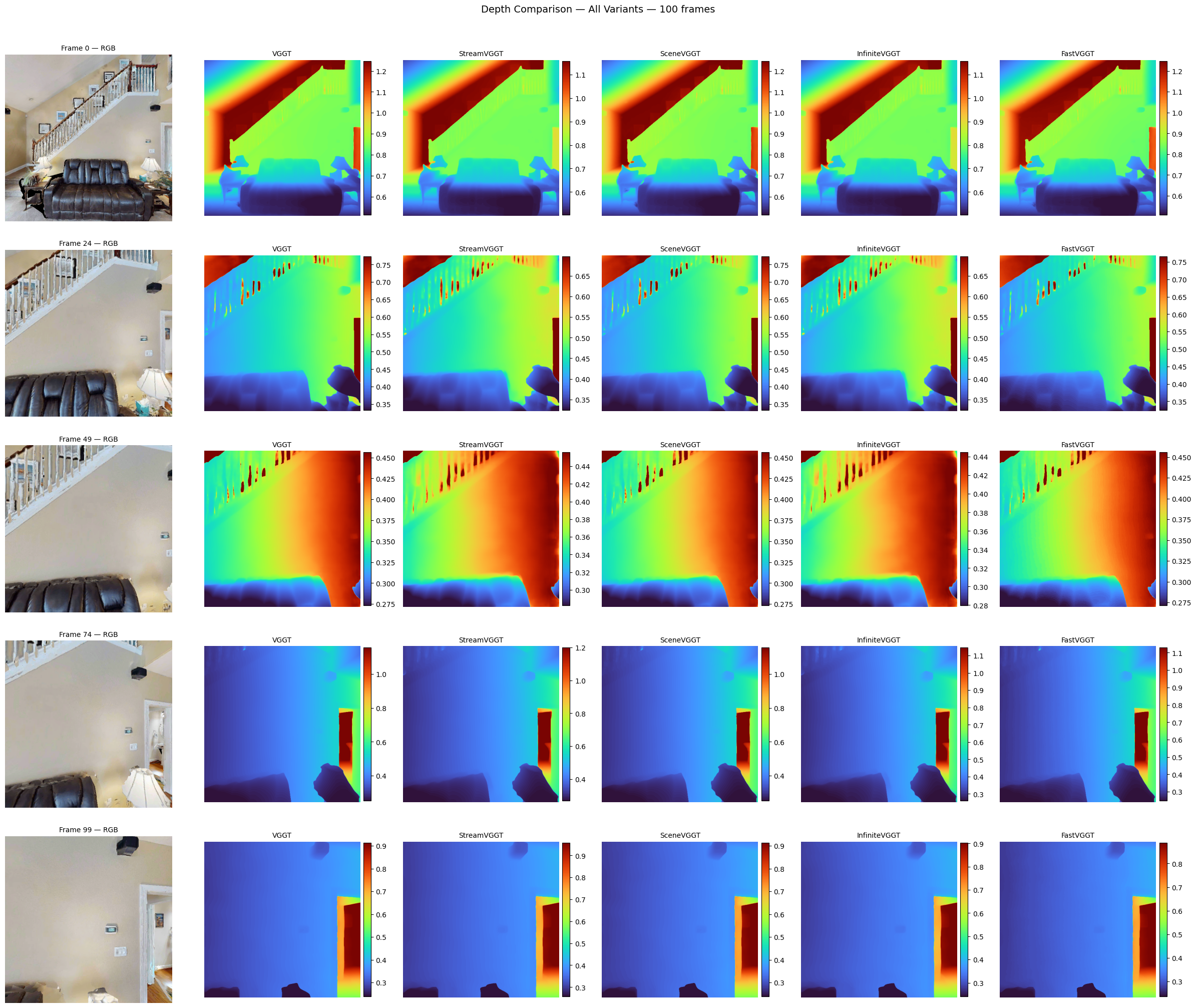

VGGT Variant Comparison

| Variant | Memory (100 frames) | Memory (500 frames) | Memory scaling |
|---|---|---|---|
| VGGT | 39.4 GB | OOM | O(N²) |
| SceneVGGT | 39.4 GB | OOM | O(N²) |
| FastVGGT | 16.1 GB | 54.7 GB | O(N) |
| StreamVGGT | 25.0 GB | OOM | O(N) |
| InfiniteVGGT | 16.6 GB | 18.0 GB | O(1) |
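The table can be turned into a simple selection rule: given a frame count and a GPU memory budget, keep the variants that fit, cheapest first. A minimal sketch, reading the Mem100 / Mem500 columns as memory at 100 and 500 input frames (an assumption) and recording OOM as infinity; the budgets in the usage lines are hypothetical.

```python
import math

# Memory (GB) from the comparison table; OOM recorded as infinity
VARIANTS = {
    "VGGT":         {100: 39.4, 500: math.inf},
    "SceneVGGT":    {100: 39.4, 500: math.inf},
    "FastVGGT":     {100: 16.1, 500: 54.7},
    "StreamVGGT":   {100: 25.0, 500: math.inf},
    "InfiniteVGGT": {100: 16.6, 500: 18.0},
}

def fitting_variants(frames, budget_gb):
    """Variants whose measured memory at `frames` fits the budget, cheapest first."""
    fits = [(mem[frames], name) for name, mem in VARIANTS.items() if mem[frames] <= budget_gb]
    return [name for _, name in sorted(fits)]

print(fitting_variants(100, 24))   # ['FastVGGT', 'InfiniteVGGT']
print(fitting_variants(500, 40))   # ['InfiniteVGGT']
```

Since output quality is comparable across variants, memory at long horizons becomes the deciding factor, and only the O(1) variant stays flat as episodes grow.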

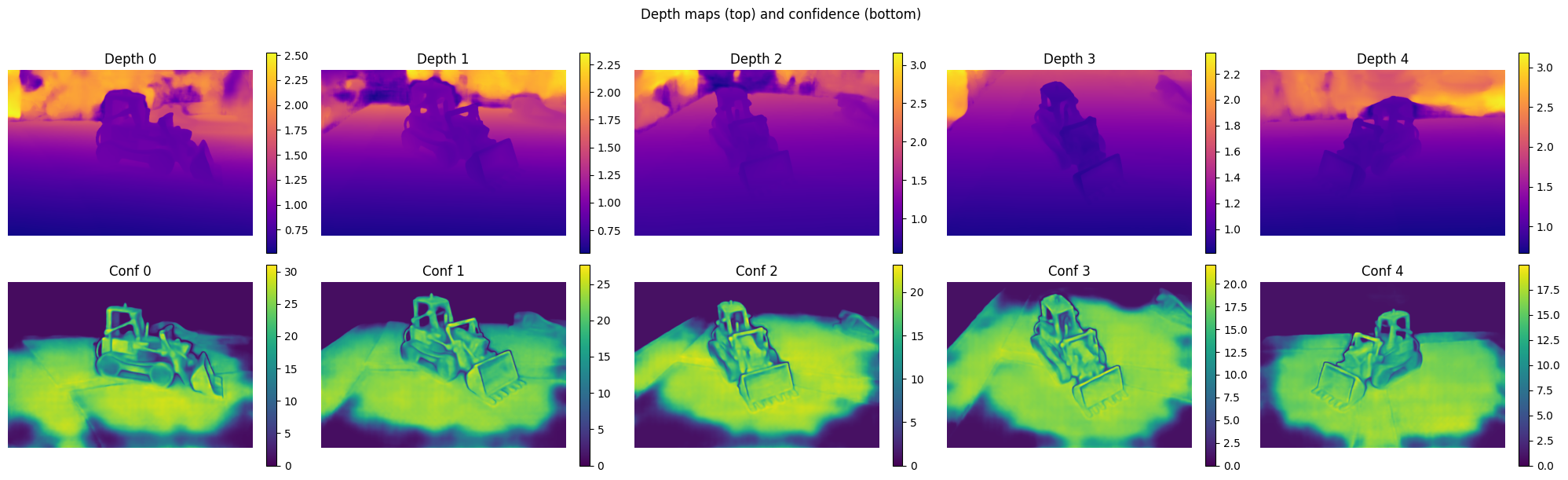


Output Quality — All Variants Equivalent

Benchmark

ObjectNav Task

Task Definition

Agent spawns at a random pose in an unseen environment. Given a target object category (e.g., "chair"), it must navigate to any instance of that category and call STOP within 0.1 m of one of the instance's viewpoints (navigable positions within 1 m of the object).

Observations (Egocentric)

Action Space

Episode budget: 500 steps max. Success = STOP called within 0.1 m of a viewpoint of any target instance.
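The termination rule can be sketched as a small function: the episode ends at the first STOP or at the 500-step budget, and succeeds only if STOP is called inside the success radius. A minimal sketch, assuming the simulator already reports the distance to the nearest target after every step; action names other than STOP are illustrative.

```python
def episode_outcome(actions, dists_to_goal, max_steps=500, success_dist=0.1):
    """Return (success, steps_used) for one ObjectNav episode.

    actions[t]: action taken at step t (names other than "STOP" are illustrative).
    dists_to_goal[t]: distance to the nearest target after step t, as reported
    by the simulator (assumed given here).
    """
    for t, (action, dist) in enumerate(zip(actions[:max_steps], dists_to_goal)):
        if action == "STOP":
            return dist <= success_dist, t + 1
    # Budget exhausted (or actions ran out) without calling STOP: failure
    return False, min(len(actions), max_steps)

print(episode_outcome(["MOVE_FORWARD", "STOP"], [1.0, 0.05]))  # (True, 2)
```

Calling STOP early is always a failure, so the agent has to learn both where the target is and when it is close enough.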

Key Metrics

| Metric | Measures |
|---|---|
| Success Rate (SR) | Did the agent find the target? |
| SPL | Success weighted by path efficiency |
| SoftSPL | Progress toward the goal weighted by path efficiency (partial credit) |
| DTG | Geodesic distance to goal at episode end |
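The four metrics can be computed from per-episode records. With shortest-path length \(l_i\), agent path length \(p_i\), success \(S_i\), and final distance \(d_i\): \(\text{SPL} = \frac{1}{N}\sum_i S_i \frac{l_i}{\max(p_i, l_i)}\), and SoftSPL replaces \(S_i\) with \(\max(0, 1 - d_i / l_i)\). A minimal sketch, assuming the start-to-goal distance equals \(l_i\) and is positive; field names are our own.

```python
from dataclasses import dataclass

@dataclass
class Episode:
    success: bool
    shortest_path: float  # l: geodesic start -> nearest goal (m), assumed > 0
    agent_path: float     # p: length of the path actually travelled (m)
    final_dist: float     # d: geodesic distance to nearest goal at the end (m)

def objectnav_metrics(episodes):
    n = len(episodes)
    # Path-efficiency factor l / max(p, l) is 1 for an optimal path
    eff = [e.shortest_path / max(e.agent_path, e.shortest_path) for e in episodes]
    sr = sum(e.success for e in episodes) / n
    spl = sum(e.success * w for e, w in zip(episodes, eff)) / n
    soft = sum(max(0.0, 1 - e.final_dist / e.shortest_path) * w
               for e, w in zip(episodes, eff)) / n
    dtg = sum(e.final_dist for e in episodes) / n
    return {"SR": sr, "SPL": spl, "SoftSPL": soft, "DTG": dtg}

eps = [Episode(True, 5.0, 10.0, 0.05), Episode(False, 4.0, 4.0, 2.0)]
print(objectnav_metrics(eps))  # SR 0.5, SPL 0.25, SoftSPL ~0.4975, DTG ~1.025
```

The second episode shows why SoftSPL is useful early in training: it fails, so SR and SPL give it zero credit, but halving the distance to the goal still earns 0.5.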


HM3D Environment & Episode Distribution

HM3D Dataset

- 1,000 real-world 3D scans of residential spaces

- Photorealistic rendering via Habitat simulator

- Dense semantic object annotations for 216 of the scenes (HM3D-Semantics)

- Multi-room layouts: kitchens, bedrooms, bathrooms, living rooms, hallways

- Standard benchmark since 2023 Habitat Challenge

6 Target Object Categories

What's in the Environment

Realistic clutter: furniture, appliances, decorations, doors, stairs. Scenes contain 50–300+ objects per scan. Agent must distinguish target from distractors in cluttered, multi-room layouts with varying lighting and occlusion.

Geodesic Distance Distribution

Shortest navigable path from spawn to nearest target instance (HM3D ObjectNav v2 val split)

Baseline

DreamerV3 — Pixel-Only World Model

DreamerV3 — Pixel-Only

Baseline · JAX

- Standard CNN encoder on 2D RGB only

- Learns geometry implicitly from pixels — no depth, no 3D, no semantics

- State-of-the-art general RL agent (150+ tasks, Nature 2025)

- First application to HM3D ObjectNav (to our knowledge, no prior work)

- Same RSSM architecture as our approach — only encoder differs

Results So Far

Curriculum Scaling — What Happens When We Add Complexity?

L1: 1 House, Chair

Buffer fix + step penalty. World model learns single-scene navigation effectively.

L2: 1 House, 6 Goals

Navigation complexity (ratio of geodesic to Euclidean distance) drives goal difficulty, not distance or count.
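The Geo/Euc ratio compares the shortest navigable path with the straight-line distance: it is 1 in open space and grows when walls force detours. A minimal sketch on a toy 4-connected occupancy grid (`#` = obstacle) using breadth-first search; Habitat computes geodesic distance on its navmesh instead, so this grid version is only an approximation, and it assumes start and goal differ.

```python
import math
from collections import deque

def geodesic_cells(grid, start, goal):
    """BFS shortest path length (in cells) on a 4-connected grid; inf if unreachable."""
    rows, cols = len(grid), len(grid[0])
    dist = {start: 0}
    queue = deque([start])
    while queue:
        r, c = queue.popleft()
        if (r, c) == goal:
            return dist[(r, c)]
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            nr, nc = r + dr, c + dc
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#" \
                    and (nr, nc) not in dist:
                dist[(nr, nc)] = dist[(r, c)] + 1
                queue.append((nr, nc))
    return math.inf

def geo_euc_ratio(grid, start, goal):
    """Geodesic / Euclidean distance; 1.0 means a straight, unobstructed path."""
    return geodesic_cells(grid, start, goal) / math.dist(start, goal)

open_room = ["....."]
wall = ["S#G",
        ".#.",
        "..."]
print(geo_euc_ratio(open_room, (0, 0), (0, 4)))  # 1.0
print(geo_euc_ratio(wall, (0, 0), (0, 2)))       # 3.0: the wall forces a detour
```

Two goals at the same Euclidean distance can therefore differ greatly in difficulty, which is consistent with the ratio rather than raw distance predicting success.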

L3: 10 Houses, Chair

Multi-scene generalization costs ~43pp SR but reduces world model overfitting.

Timeline

6-Month Execution Plan

BWUniCluster Setup + Baseline

Environment setup on cluster · Start DreamerV3 baseline training · Compare VGGT variants

VGGT Integration + Baseline Done

Integrate chosen VGGT variant into DreamerV3 encoder · Finish baseline training

Full Pipeline + Semantic Head

Finish full pipeline of our approach · Start training custom semantic head

Semantic Head Integration

Integrate new semantic head into pipeline · Ablation runs

Iterate + Refine

Improve weakest components · Generalization tests · Buffer for reruns

Writing

Thesis writing · Final analysis · Defense prep